From Hunch to Hypothesis

Experimentation isn’t a simple “switch” that a brand can flip, but more like a journey that benefits from small steps to create big shifts.

Most brands start with a single test and eventually build a growth engine that draws on AI-powered tools, multivariate testing, and more.

But why even evolve from smaller tests to more complex ones? The answer is simple: high-maturity teams that continue to expand their experimentation strategies don’t just “test”; they are more likely to learn, iterate, and drive increased revenue.

In this article, we’ll break down the roadmap from the first win to becoming an industry “blazer.”

What is Experimentation Maturity?

Experimentation maturity refers to how developed an organization’s current strategy is in running, analyzing, and learning from experiments.

This includes the organization’s expertise in running tests such as A/B tests, along with its tools, processes, and culture.

Why is Experimentation Maturity Important?

Experimentation maturity is important as stronger expertise allows for more reliable, faster experiments – which can better aid decision-making.

In addition to an increase in data-informed decisions, more developed experimentation strategies also mean brands continuously improve products and outcomes while reducing overall business risk.

Step 1: Discover – Find Your First Win

In the first stage of experimentation maturity, a single person or small group is still advocating for experimentation. This is better known as the “Ad Hoc Testing Phase”, which is where experimentation is more reactive than proactive.

Tests in this phase are typically:

- Launched on a whim with less strategic planning in mind

- Implemented with a hypothesis, but without long-term goals in mind

- Conducted without stable metrics for greater insight

- Based on ideas that haven’t been backed by data

- Often aimed at a quick win

During this first step of your brand’s experimentation journey, the platform may be live – but it hasn’t been put to its best use yet. This is similar to someone who has recently purchased a new smart device, but hasn’t set it up to sync with all of their other devices – making their new electronic device less efficient than it could be.

Oftentimes during the discovery phase, there is one “lone champion” trying to fight for greater optimization tactics. This person challenges those around them to overcome skepticism and to treat testing not as testing for its own sake, but as an effort to create real change.

Goals for this stage:

- Achieve one clear, high-visibility win to prove the ROI of a testing program

- Avoid mistaking excessive testing activity for experimentation maturity

- View it as a stepping stone to ease into the world of optimization
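A first win is only persuasive if it holds up statistically. As a minimal sketch of one way to check this, the snippet below runs a two-proportion z-test on conversion counts. The function name and all numbers are hypothetical and chosen for illustration; your testing platform may well use a different statistical method.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (relative_lift, p_value) comparing variation B against control A."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis of no difference.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    lift = (rate_b - rate_a) / rate_a
    return lift, p_value


# Hypothetical numbers, for illustration only.
lift, p_value = two_proportion_z_test(conv_a=200, n_a=10_000, conv_b=250, n_b=10_000)
print(f"Relative lift: {lift:.1%}, p-value: {p_value:.4f}")
```

A small p-value suggests the lift is unlikely to be noise, which makes the result easier to present as proof of ROI.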

Step 2: Structure – Build Your Blueprint

The second stage of experimentation maturity is when the organization shifts its focus from spontaneous tests to a more reliable, repeatable “rhythm”.

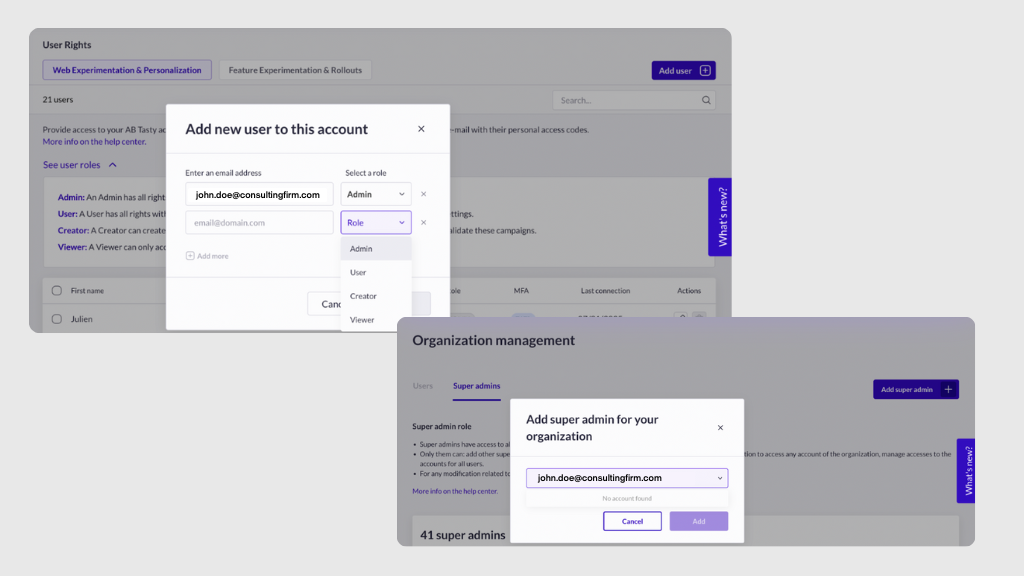

In turn, this is when a more formal optimization team is usually built and more technical tools are established – such as client-side and initial server-side tools.

This step of a brand’s experimentation maturity usually involves:

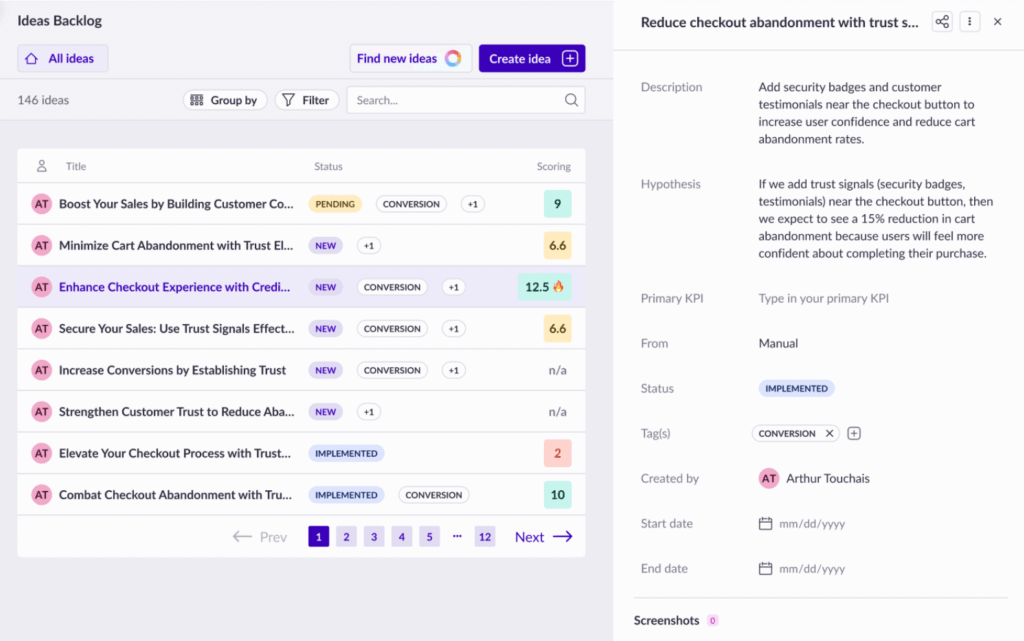

Hypothesis Frameworks

Develop stronger frameworks for creating and validating your experimentation hypotheses.

Defined KPIs

Establish clear primary and secondary Key Performance Indicators to measure success accurately.

Clear Ownership

Define who owns which experiments to ensure accountability and streamline the process.

Starter Roadmap

Create a foundational experimentation roadmap to guide your initial testing efforts and strategy.

Adopted Templates

Ensure templates for experiment design and analysis are widely adopted for consistency.

Team Expansion

Expand usage beyond marketing and CRO teams for company-wide implementation and impact.

This stage of the experimentation journey is where the brand is no longer a complete stranger to the world of optimization, but not yet an expert either.

Goals for this stage:

- Transition from “random tests” to a structured roadmap with prioritized hypotheses

- Treat learnings as a more systematic process that can lead to better tests

- Recognize that experimentation isn’t about singular wins, but creating a reliable formula for continuous improvement
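To make “prioritized hypotheses” concrete, here is a minimal sketch of a scored backlog. It assumes an ICE-style rating (impact, confidence, ease), a common prioritization convention that this article does not itself prescribe. The class, the 1-10 scale, the choice to multiply the ratings, and the backlog entries are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    name: str
    impact: int      # expected effect on the primary KPI, rated 1-10
    confidence: int  # strength of the supporting evidence, rated 1-10
    ease: int        # how cheap the test is to build and run, rated 1-10

    @property
    def score(self) -> int:
        # Multiplying the ratings is one of several conventions; some teams average them.
        return self.impact * self.confidence * self.ease


def prioritize(backlog):
    """Return hypotheses sorted from highest to lowest score."""
    return sorted(backlog, key=lambda h: h.score, reverse=True)


# Hypothetical backlog, for illustration only.
backlog = [
    Hypothesis("Shorten checkout form", impact=8, confidence=6, ease=4),
    Hypothesis("Change CTA wording", impact=3, confidence=5, ease=9),
    Hypothesis("Add reviews to product page", impact=7, confidence=7, ease=5),
]
roadmap = prioritize(backlog)
for rank, h in enumerate(roadmap, start=1):
    print(rank, h.name, h.score)
```

The exact scoring formula matters less than applying the same one to every idea, so the roadmap reflects shared criteria rather than whoever argued loudest.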

Bolder Tests to Your Better

We learned more about this with Marianna Stjernvall, who has conducted over 500 A/B tests and understands the value of learning from your failures to accomplish future wins.

With her ample knowledge in CRO, A/B testing, and experimentation – we discover all of the different ways that Marianna came to understand the value in testing, learning, and trying again.

Listen to the full podcast episode here →

Step 3: Accelerate – Team Up. Try Something New.

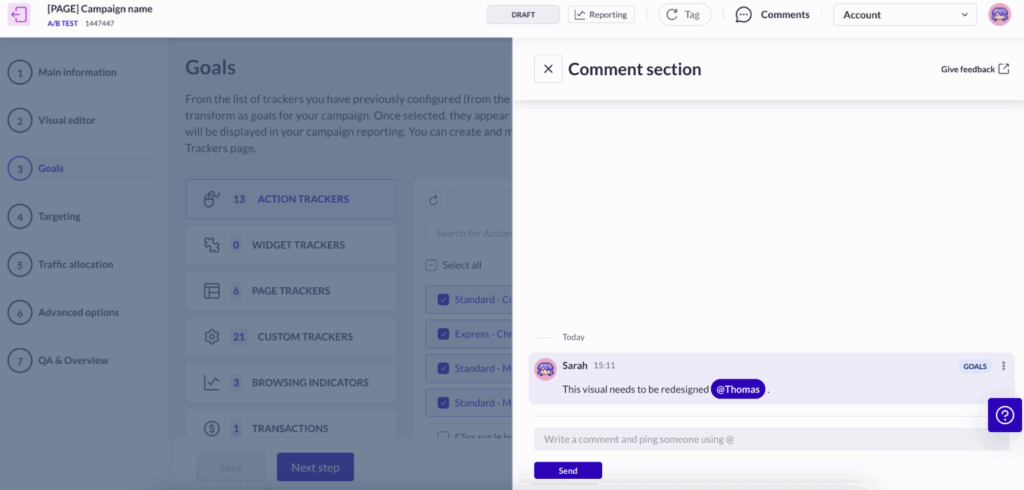

In the third step of its experimentation journey, a brand starts to foster stronger cross-team collaboration. This is generally when experimentation moves beyond the marketing department and starts to involve Product and UX.

During this phase, an organization becomes more well-versed in cross-team collaboration – ultimately standardizing the practice as they dive deeper into future experiments and testing.

Advancing with AB Tasty

Our work with Pion is the perfect example of teaming up to make progress. Through the use of Feature Experimentation & Rollout, Pion was able to benefit from our server-side platform. The platform helps brands manage user experiments before a full release, which allows for both precision and security throughout the testing process.

Partnering with AB Tasty allowed Pion to:

- Select smarter tests to prioritize experiments that could deliver the most valuable information to move forward

- Be bold in their experiments to explore inventive ideas and break routine thinking

- Develop stronger hypotheses to ensure tests conducted would provide meaningful insights

Learn more about Pion’s collaboration with AB Tasty here →

Goals for this stage:

- Improve current technical capabilities, for example by leveraging more advanced data

- Adopt more complex experimentation tactics such as heat maps and user recordings

- Increase testing velocity and finalize a company-wide communication plan for shared learnings

- Ignite interest in using AI and machine learning to develop predictive models and boost personalization

Step 4: Scale – Testing at the Speed of Business

At this stage of the experimentation journey, optimization is no longer a project – but a core business strategy. In turn, this is usually when brands will begin to develop stronger standards, and maybe even experimentation or personalization “playbooks” to establish company-wide guidelines.

Empowering Speed with PUMA

PUMA and AB Tasty teamed up to accomplish this precise goal: personalization at scale that can bring long-term growth.

Together, PUMA and AB Tasty empowered global personalization through:

- Gender-Based Personalization: PUMA used our segment builder to see which products men and women preferred to purchase. This helped with metrics including page views, on-page time, and more.

- Let Data Lead the Way: Through the use of newfound insights, PUMA guided their strategy to become more information-led. This encouraged more effective experimentation that resulted in increased long-term revenue.

- Teamwork Makes the Dream Work: Experimentation isn’t just for CRO teams, but can be used as a brand-wide method to boost optimization. PUMA learned this with AB Tasty and now fosters a company-wide culture where everyone can contribute ideas for new tests.

Read the full case study with PUMA here →

Hallmark characteristics for teams progressing to this stage of experimentation maturity include developing a Center of Excellence (CoE) model and an overall more robust team structure for optimization.

Goals for this stage:

- Measure KPIs and additional success metrics to ensure they are aligned with top-level business goals (Revenue per Visitor, CLV, etc.)

- Encourage all departments to not only come up with ideas for tests, but to conduct them safely and independently at scale
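As a small worked example of one of the metrics named above, Revenue per Visitor is total revenue divided by the number of visitors. The sketch below compares it across two variations. The function name and the figures are hypothetical and for illustration only.

```python
def revenue_per_visitor(total_revenue, visitors):
    """Revenue per Visitor: total revenue divided by the number of visitors."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return total_revenue / visitors


# Hypothetical experiment results, for illustration only.
results = {
    "control": {"revenue": 52_000.0, "visitors": 20_000},
    "variation": {"revenue": 56_000.0, "visitors": 20_000},
}

rpv = {
    name: revenue_per_visitor(r["revenue"], r["visitors"])
    for name, r in results.items()
}
# Relative uplift of the variation over the control.
uplift = (rpv["variation"] - rpv["control"]) / rpv["control"]

for name, value in rpv.items():
    print(f"{name}: {value:.2f} per visitor")
print(f"Uplift: {uplift:.1%}")
```

Reporting a revenue-based metric alongside conversion rate helps keep individual tests tied to top-level business goals.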

Step 5: Blaze – Dare to Go Further

At the highest level of maturity, brands become experts in experimentation and recognize it as a pivotal growth and product strategy.

Once this last stage of experimentation maturity has been achieved, every employee can be considered an experimenter. This is when the “HiPPO” (Highest Paid Person’s Opinion) is replaced by data, and choices can be made from insight-led statistics as opposed to emotionally driven business decisions.

Goals for this stage:

- A cultural shift where failure is viewed as a high-value learning opportunity to test and try again rather than a loss

- Continuous and automated optimization that represents the organization’s DNA

- Dedication toward long-term development of innovative ideas and pursuing bold experimentation

Conclusion: Your Journey to Better

Every stage of experimentation maturity is a stepping stone to finding your better.

No matter where your brand currently stands on its optimization roadmap, we believe that your next big insight is just one test away. Small steps can create big change, and it all starts with curiosity calling your company’s name.

Want to see your brand progress through the steps of experimentation maturity, together?

FAQs

Still have questions about experimentation maturity? Here are the answers you need.

What is Experimentation Maturity?

Experimentation maturity is a measure of how effectively your organization uses testing to make decisions and encourage company growth. It is usually determined by how developed your processes, tools, and culture are for running experiments consistently and at scale.

How can I know which stage of experimentation maturity my company is at?

You can determine how mature your brand’s experimentation process is by looking at how structured your testing process is, how many teams contribute, and how you use data to make decisions. Comparing these factors to industry benchmarks can help you identify your current stage in experimentation maturity.

How can my company boost its Experimentation Maturity?

Investing in training, tools, and clear processes can enable teams to run more tests confidently. Encouraging collaboration across departments and using insights from experiments to guide strategic decisions also helps your brand progress through the stages of experimentation maturity.

About the Author

Angélique de Taddeo

Angélique is Chief of Staff at AB Tasty, where she works closely with leadership on strategic initiatives and cross-functional projects. With a strong interest in product, customer experience, and market trends, she shares insights and perspectives through her articles.