David Mannheim explains a remastered approach to personalization for long-term customer loyalty

With over 15 years of experience in digital businesses, David Mannheim has helped many companies, such as ASOS, Sports Direct and Boots, improve and personalize their digital experience and conversion strategy. He was also the founder of one of the UK’s largest independent conversion optimization consultancies, User Conversion.

Drawing on his experience as an advisor helping e-commerce businesses innovate and iterate on personalization and creativity at speed, David has recently published his own book, in which he tackles the “Person in Personalisation”: why he believes personalization has lost its purpose and what to do about it. David is currently building a solution to this problem with his new platform, Made With Intent, a product that helps retailers understand the intent and mindset of their audience, not just their behaviors or what page they’re on.

AB Tasty’s VP Marketing Marylin Montoya spoke with David about the current state of personalization and the importance of going back to basics and putting the person back in personalization. He also highlights the need for brands to build a relationship with customers based on trust and loyalty, particularly in the digital sphere, instead of focusing on immediate gratification.

Here are some key takeaways from their conversation.

Personalization is about being personal

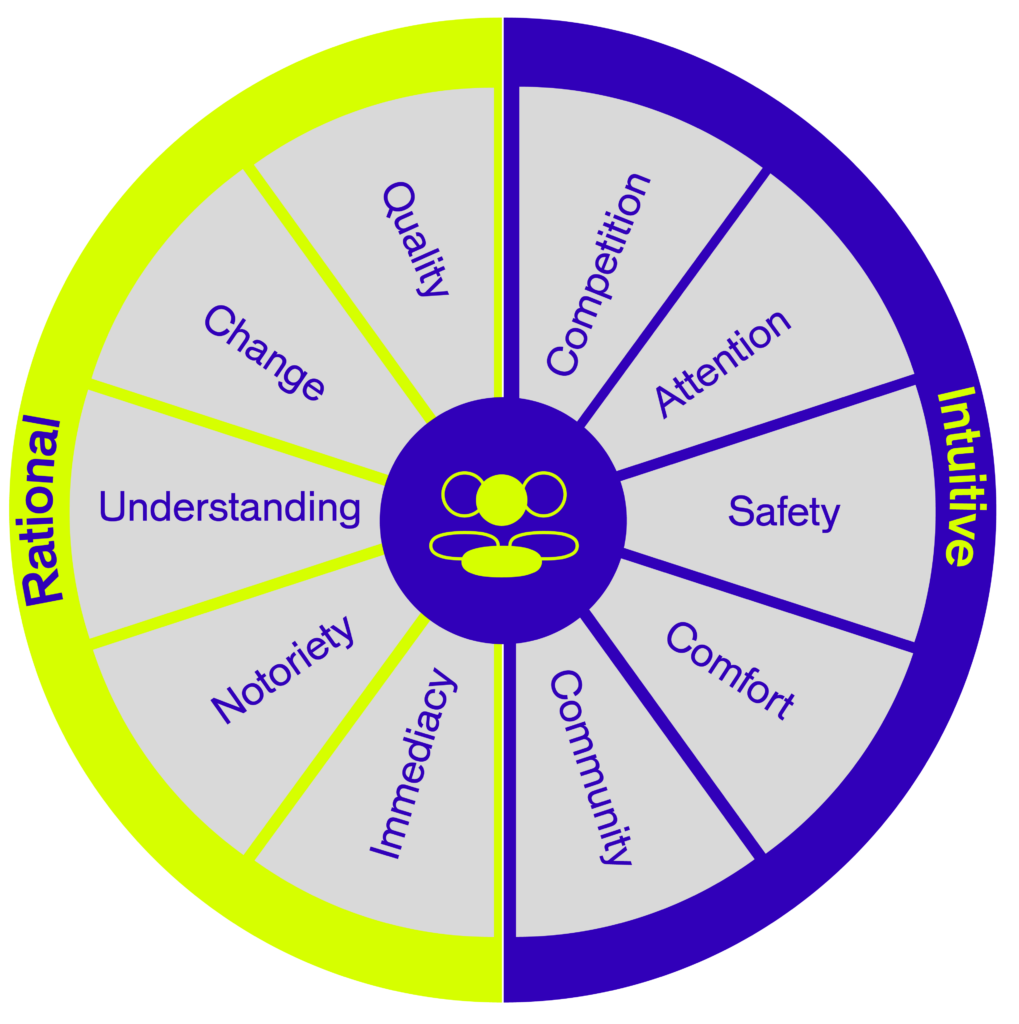

David stresses the importance of not forgetting the first three syllables of personalization. In other words, it’s imperative to remember that personalization is about being personal and putting the person at the heart of everything: it’s all about customer-centricity.

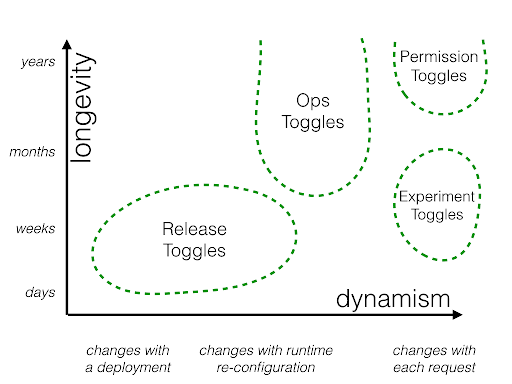

For David, personalization nowadays has become too commercialized and too focused on immediate gratification. Instead, the focus should be on metrics such as customer lifetime value and loyalty. Personalization should be a strategic value add rather than a tactical add-on used solely to drive short-term sales and growth.

“If we move our metrics to focus more on the long-term metrics of customer satisfaction, more quality than quantity, more about customer lifetime value and loyalty as well as recognizing the intangibles, not just the tangibles, I think that puts brands in a much better place.”

He further argues that there is a sort of frustration point when it comes to the topic of personalization and who actually does it well. This frustration was clear after David interviewed 153 experts for his book, most of whom struggled to answer the question of “who does personalization well” and found it difficult to name any brands outside of the typical “big players” such as Netflix and Amazon.

This frustration, David believes, stems from the difficulty of replicating an in-store experience in a human-to-screen relationship. Nonetheless, when customers are loyal to a brand, that same loyalty should be reciprocated from the brand side as well to make a customer feel they’re more than just a number. The idea is to achieve a sort of familiarity and acknowledgment with the customer and create a genuine, authentic relationship with them. This is the key to unlocking customer-centricity.

It’s about offering a personalized experience that focuses on adding value for each individual customer, rather than exploiting value, where customers end up with only a commercialized experience geared towards driving growth for the company itself.

Disparity between brands’ and customers’ perceptions of personalization

Citing Sailthru’s Personalization Index, David refers to a particular finding in its yearly report: 71% of brands think they excel in personalization, but only 34% of customers actually agree.

In that sense, there’s a mismatch between customers’ expectations and brands’ own expectations of what is competent customer service.

He refers to recommendations as one example that brands primarily incorporate into their personalization strategy. However, he believes recommendations only address the awareness part of the AIDA model (Awareness, Interest, Desire and Action).

“Product discovery for me is only one piece of a puzzle. If you take personalization back to what it’s designed to be, to be personal, well, where is the familiarity? Where’s the acknowledgment? Where’s the connection? Where’s the conversation?” David argues.

What’s missing is a core, intangible ingredient that helps create a relationship between two individuals, in this case, a human and a brand. Because brands have difficulty pinpointing what that is, they choose instead to base their personalization strategy on something more tangible and visible – recommendations.

For brands, recommendations have become so embedded in customer expectations that they have come to stand for personalization as a whole, particularly as that’s the approach the “bigger” brands have adopted when it comes to personalizing the user experience.

“It becomes an expectation. I go on X website so I expect the bare minimum which is to see things that are relevant to what I search for or the things that I’m interested in... This is what people associate personalization to,” David says.

Recommendations are an essential first step of personalization but David argues the future of personalization requires brands to go even further.

Brands should focus on building trust

In order for brands to build that sense of familiarity and truly become more personal with customers, brands need to take personalization to the next stage beyond awareness. For example, customers should be able to trust that a brand is recommending to them what they actually need rather than what makes the most profit.

David believes that the concept of trust is missing in a human-to-screen relationship, which is what’s hindering brands from reaching that next level.

In other words, establishing trust with customers means transforming the whole approach to personalization, along with its purpose, to demonstrate greater care with the few rather than “trying to get the many.” Brands should shift their focus to care, which David believes is what makes a brand truly customer-centric.

“I think it’s an initiative, if you can call it that, to focus on care. It does make the brand more customer-centric. You’re putting the customer, their experiences and expectations first with the purpose of providing a better experience for them.”

In that sense, two crucial aspects play into the concept of trust, according to David: competence and care.

Brands need to be competent, in the sense that customers can trust they’re being recommended the most suitable products for their needs rather than the ones with the higher profit margin; in other words, products that are best for the customer rather than for the business. At the same time, brands need to demonstrate care by being more personable with customers in order to create a connection between brand and consumer.

“The more caring you are, the more you can demonstrate trust,” David says.

“Think of banking. Banking demonstrates all the competence in the world, but no care whatsoever. And that therefore destroys their trust. Think of the other way around. Think of your grandma giving you a sweater at Christmas. I’m sure you trust your grandma, but you won’t trust her to buy you a Christmas present, for example.”

For David, context is a prerequisite for trust, and the best way to understand user context is through intent, which is where the difference between persuasion and manipulation lies. This is why he has spent the past 8 months building Made With Intent, which is focused on that very concept.

When it comes to recommendations, in particular, it’s essential to contextualize them and understand customer intent. Only then can a brand excel at its recommendation strategy and create a relationship of trust where customers can be confident they’re being recommended products unique to them only.

What else can you learn from our conversation with David Mannheim?

- His take on AI and its role in personalization

- Ways brands can demonstrate care to build trust and familiarity with their consumers

- How brands can shift their personalization approach

About David Mannheim

David has worked in the digital marketing industry for over 15 years. Along with founding one of the UK’s largest independent conversion optimization consultancies, he has worked with some of the UK’s biggest retailers to improve and personalize their digital experience and conversion strategy. David has since published his own book about personalization and is also building a new platform that helps retailers understand the intent and mindset of their audience, not just their behaviors or what page they’re on.

About 1,000 Experiments Club

The 1,000 Experiments Club is an AB Tasty-produced podcast hosted by Marylin Montoya, VP of Marketing at AB Tasty. Join Marylin and the Marketing team as they sit down with the most knowledgeable experts in the world of experimentation to uncover their insights on what it takes to build and run successful experimentation programs.