From Product Page to Purchase: Where E-commerce Sales Are Really Won

The customer journey can be tricky.

E-commerce brands invest heavily in traffic, but revenue is often won or lost within a single click, especially now that many people use AI tools to search for products instead of a traditional Google search.

In 2026, e-commerce brands are no longer competing only in Google search results or on homepages, but also through AI tools, social platforms, and viral moments that drive shoppers directly to product pages.

As traffic from alternative sources like ChatGPT, TikTok, and Instagram continues to grow, product pages have become an integral point for conversion in the customer journey.

This is exactly why brands need to optimize the path from product detail page (PDP) to checkout: it’s where friction remains at its highest.

In turn, small moments of doubt, distraction, or confusion can derail a purchase entirely. This is where optimization steps in. It is not about one “fix,” but about identifying multiple opportunities across the funnel.

This article breaks down 10 practical optimization opportunities to help boost e-commerce sales, and explains how optimization platforms like AB Tasty can help.

Why the PDP-to-Checkout Journey Deserves More Attention

PDPs are where shoppers evaluate value, relevance, and trust, making them especially important in the shopper’s buying journey.

Cart and checkout matter just as much, because that is where buying intent often gets tested. Yet many brands optimize acquisition more aggressively than mid- and lower-funnel UX.

As a result, lost opportunities for revenue still appear in plain sight, such as:

- Weak urgency

- Unclear product info

- Poor mobile UX

- Checkout friction

Brands can take one step closer to new levels of success when they treat the journey from PDP to checkout as the revenue driver it really is.

Opportunity #1: Make Your PDP Do More Than Sit There and Look Pretty

Your product detail page has one job: help shoppers feel confident enough to move forward.

A beautiful PDP is nice, but if it leaves customers hunting for answers, it’s not doing the heavy lifting needed to convert them.

The best PDPs don’t just show a product. They help shoppers take a step closer to purchasing it.

This means it’s best for PDPs to have:

- Clear, benefit-led product descriptions

- High-quality imagery

- Useful videos

- Easy-to-scan details like sizing, specifications, materials, or compatibility

In short: less mystery and more clarity are key to ensuring users convert somewhere between the PDP and checkout.

When purchase information is easy to find, shoppers spend less time second-guessing and more time progressing to checkout with the items in their cart.

A strong PDP reduces friction before it starts, answers questions before they become objections, and builds confidence before doubt creeps in – all of which gives shoppers the context they need to act on a potential purchase.

As uncertainty is one of the fastest ways to lose momentum in the buying journey, it’s also one of the most important elements to address.

Opportunity #2: Put Trust Signals Where Doubt Likes to Linger

Trust matters most when shoppers are closest to taking action. That’s why brands should never bury trust signals in footers or on separate FAQ pages, as they perform best when they appear at the exact moments when doubt is most likely to arise.

Things like reviews, ratings, delivery information, returns policies, payment reassurance, and guarantees all help reduce perceived risk. However, having them somewhere on your brand’s website is not enough. Where they appear is just as crucial, as placement and visibility matter.

For example, a well-positioned review module near the CTA or clear return messaging on the PDP can do far more than a generic trust badge tucked away at the bottom of the page.

The goal is simple: make shoppers feel safe saying yes to your product or service. When brands proactively answer concerns around quality, delivery, payment, or returns – they can successfully turn anxiety into increased purchase confidence.

Trust is not a background element, but a conversion tool that works best when it appears right on cue.

Opportunity #3: Turn Product Discovery Into Product Confidence

It’s one thing to get a customer to land on the page, and another to get them to convert.

When a shopper reaches a PDP, they haven’t made up their mind yet. They’re still exploring, comparing, and weighing options. That’s your cue to guide them instead of pushing them to add to cart right away.

Smart recommendation blocks accomplish this exact goal. Related products, complementary items, “frequently bought together” suggestions, and recently viewed items all work together to keep discovery alive. They help shoppers refine their choices, expand their basket naturally, and feel like the experience was built for them.

Personalization makes it even more powerful. When suggestions are shaped by real browsing behavior and intent, they stop feeling like noise and start feeling like the answers to what they’re looking for.

The result? Shoppers who explore more, hesitate less, and add more to their cart. This is why better product discovery doesn’t just support conversion, but can actually move average order value in the right direction, too.

There may only be one page, but there are endless possibilities. Let’s make every scroll count, together.

Opportunity #4: Make Add-to-Cart Feel Like a Natural Next Step

The add-to-cart button is one of the most important moments on any PDP – but it remains a frequently overlooked step.

If shoppers have to hunt for the button, second-guess it, or wonder what happens after they click, you’ve already lost momentum at the moment of peak interest. The goal is to make that step feel obvious, easy, and almost inevitable.

That means testing more than you think, including the smallest of changes. Try different CTA wording, such as “Add to Bag” vs. “Get It Now” vs. “Reserve Yours,” as each can land differently depending on your audience. Test button placement, especially on mobile, where a less-visible CTA can be the difference between a tap on “add to cart” and a scroll-away. Lastly, add urgency signals, like low stock alerts, popularity indicators, and delivery windows, close enough to the button to matter.
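
To make the CTA wording test concrete, here is a minimal sketch of how visitors could be split consistently between variants. It is an illustration only, not AB Tasty’s implementation: the function name, the experiment name, and the visitor ID format are all assumptions.

```python
import hashlib

# CTA wordings taken from the examples above.
CTA_VARIANTS = ["Add to Bag", "Get It Now", "Reserve Yours"]


def assign_cta_variant(visitor_id: str, experiment: str = "pdp-cta-wording") -> str:
    """Return the same CTA variant every time for a given visitor.

    Hypothetical helper: hashing the visitor ID keeps the assignment
    stable across page views without storing any state.
    """
    # Include the experiment name so other experiments bucket
    # the same visitor independently.
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(CTA_VARIANTS)
    return CTA_VARIANTS[bucket]
```

Calling `assign_cta_variant("visitor-123")` on every page view returns the same wording for that visitor, which keeps the experience consistent while the test runs.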

And don’t forget what comes after the click. Brands should aim to provide users with a clear confirmation that the item was added, a smooth transition to the cart, and a reassuring next step. These small moments reduce hesitation and make commitment feel lighter.

Less friction. More forward motion. That’s the test worth running.

Opportunity #5: Don’t Let the Cart Become a Waiting Room

Shoppers who reach the cart are close, but “close” isn’t “converted.”

The cart is still a place where purchase momentum can grind to a halt, and where a few smart optimizations can make all the difference.

The key is to start with clarity. Brands should ask themselves: can shoppers see exactly what they’re buying, including product name, image, size, and quantity, without squinting or scrolling? If not, that’s your first test, as shopper confidence drops fast when details feel unclear.

Next, it’s important to look at the friction points hiding in plain sight:

- Shipping estimates: Show them early on, as surprise costs at checkout remain one of the leading reasons carts get abandoned.

- Promo code fields: Placement matters. A poorly positioned field can send shoppers off-site hunting for a discount they may never come back from.

- Upsell blocks: Relevant suggestions can lift average order value, but only if they feel helpful. Timing and placement count more than you think.

- CTA clarity: One clear path forward. No competing buttons, no confusing hierarchy.

The cart should reinforce the decision to buy – not create new reasons to pause. Keep it clean, keep it confident, and keep shoppers moving toward checkout.

Every element is a test waiting to happen. Taking one step closer to success means running them.

Opportunity #6: Make Checkout Feel Shorter Than It Is

At checkout, no one wants pop-ups or friction that make the process slower or less smooth, as either can lead to cart abandonment.

Checkout friction remains one of the most common causes of lost revenue. To avoid this, it’s important that brands aim to simplify forms, reduce fields, and remove unnecessary steps.

The tests below can help reduce friction during the checkout process:

- Single-page vs. multi-step checkout: Test whether shoppers convert better with one streamlined checkout page or a guided multi-step flow that breaks the process into smaller actions.

- Guest checkout visibility: Make guest checkout easy to find so users who do not want to create an account can move forward without unnecessary friction.

- Autofill options: Reduce manual effort by supporting autofill for contact, shipping, billing, and payment fields to help users complete checkout faster.

- Error handling: Use clear, specific error messages that explain what went wrong and how to fix it without forcing users to restart the checkout process.

- Progress indicators: Show users where they are in the checkout journey and how many steps remain to reduce uncertainty and encourage completion.

- Reassurance tactics: Build confidence with trust signals such as secure payment messaging, return policy reminders, delivery details, and customer support access.

The main goal of this step is to make checkout feel manageable and intuitive, as a smoother checkout experience can have a substantial, direct impact on conversions.

Opportunity #7: Bring Reassurance Into the Final Stretch

Shoppers often feel the most anxiety right before payment, when they finally have to enter sensitive payment details and commit to spending money.

That’s why it’s important to reinforce confidence with secure payment messaging, easy returns, clear delivery options and shipping times, flexible payment methods, and customer support options.

Emotional friction can be just as important as UX friction.

Remember, even a technically smooth experience can still leave users feeling uncertain, overwhelmed, or hesitant. A checkout flow may load quickly and function perfectly, yet still fail to make a user feel confident about pricing, flexibility, or trustworthiness.

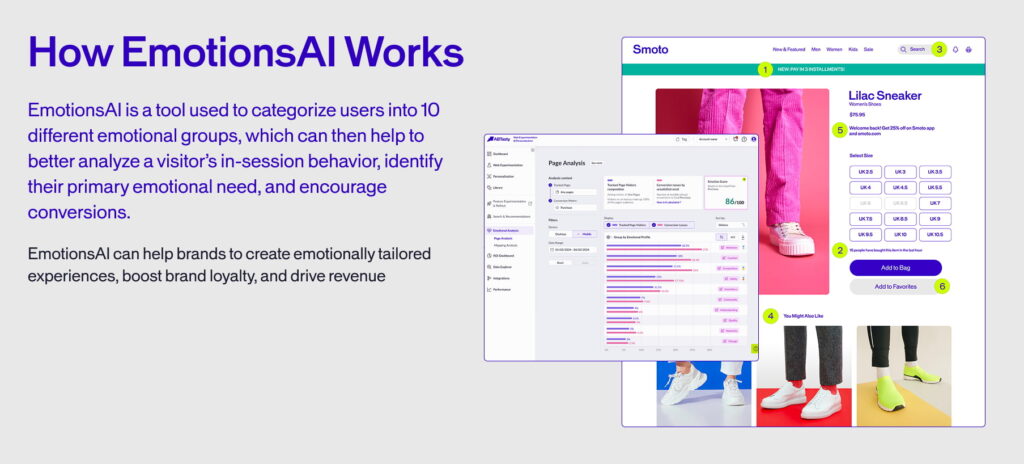

This is why brands should test which kind of reassurance works best for their audience. At AB Tasty, we can help brands do that with tools like EmotionsAI, which uses 10 different emotional segments to categorize users based on their specific emotional needs.

Opportunity #8: Use Personalization to Keep the Journey Relevant

In the same way that all people are unique and respond to different messages, no two shoppers show up to your site the same way.

A first-time visitor browsing a product page has different expectations than a loyal customer returning to reorder. Treating them identically is a missed opportunity – and is often the reason why they end up leaving.

Tactics like personalization allow your brand to adapt the journey to where the user actually is, based on their intent, behavior, and context for arriving at the site in the first place.

That means showing the right product recommendations at the right moment, not just the most popular ones. It means adjusting urgency messaging based on what’s in someone’s cart instead of mass-blasting the same countdown timer to everyone. It means surfacing loyalty prompts for customers who’ve earned them, and welcoming new visitors with content that builds trust instead.
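
As a rough illustration, the rules described above could be expressed as a simple decision function. Everything here is hypothetical: the `Visitor` fields, the message labels, and the three-order loyalty cutoff are assumptions chosen for the example, and a real setup would be driven by your own segments and data.

```python
from dataclasses import dataclass


@dataclass
class Visitor:
    """Hypothetical visitor context; field names are illustrative."""
    is_returning: bool
    past_orders: int
    cart_item_count: int


def pick_message(visitor: Visitor) -> str:
    """Choose one on-page message based on who the visitor is."""
    # Loyal customers: surface the loyalty prompt they have earned.
    # The cutoff of 3 past orders is an assumed value.
    if visitor.is_returning and visitor.past_orders >= 3:
        return "loyalty_prompt"
    # Items already in the cart: urgency tied to those items,
    # rather than the same countdown timer for everyone.
    if visitor.cart_item_count > 0:
        return "cart_urgency"
    # First-time visitors: lead with content that builds trust.
    if not visitor.is_returning:
        return "trust_content"
    # Everyone else: recommendations based on browsing behavior.
    return "behavioral_recommendations"
```

The order of the checks is itself a design choice worth testing, since a loyal customer with a full cart could reasonably see either message.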

When implemented and executed well, personalization can remove these potential roadblocks and make the path to purchase feel natural – as if the experience was built for that exact person. That’s what keeps shoppers moving forward, from PDP to cart to checkout, without second-guessing themselves.

In the spirit of “working smarter, not harder,” the goal isn’t to show more, but to show what’s relevant.

Opportunity #9: Test Your Way to Higher AOV, Not Just Higher Conversion

More orders is a great goal, but creating more value per order is even better.

Conversion rate gets a lot of attention – and rightly so. But if you’re only optimizing for more checkouts, you’re leaving revenue on the table. Average order value (AOV) is just as powerful a tool, and yet – it’s often undertested.

Think about the moments where a shopper could naturally spend more: a well-timed bundle on the PDP, a cross-sell in the cart, or a free shipping threshold that a user is close to passing can all help shoppers make the most of their purchase.

These aren’t tricks or consumer traps, but helpful nudges in the direction of conversion when they’re relevant and well-placed.

Remember, relevance and timing are everything. An upsell that feels forced can create doubt. An offer that appears too early interrupts the flow. That’s why testing matters here as much as anywhere else.

Some ideas brands could test include:

- Bundle configurations: Try different bundle configurations to understand which product combinations feel most relevant, valuable, and conversion-friendly for shoppers.

- Cross-sell placement: Experiment with where cross-sells appear and how they are framed, from product pages to carts, checkout flows, and post-purchase moments.

- Free shipping threshold: Test the free shipping threshold that moves the needle on average order value without creating friction or hurting overall conversion.
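
For the free shipping threshold idea above, a small sketch shows the kind of cart message each threshold variant would produce. The function name, the message wording, and the dollar amounts are assumptions for illustration only.

```python
from decimal import Decimal


def free_shipping_message(cart_total: Decimal, threshold: Decimal) -> str:
    """Return the cart message for a given free shipping threshold.

    Hypothetical helper: the threshold is passed in so that each
    test variant can use its own value.
    """
    remaining = threshold - cart_total
    if remaining <= 0:
        return "Free shipping unlocked."
    return f"Add ${remaining:.2f} more to get free shipping."
```

With a cart worth $37.50, a $50 threshold asks the shopper for $12.50 more, while a $40 threshold asks for only $2.50. Which gap actually raises average order value without hurting conversion is exactly what the test should answer.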

Your brand is on its own unique journey. Let the data tell you what actually works for your customers, not what worked for someone else’s.

Small shifts in AOV, scaled across thousands of orders, can compound faster than you think.

Opportunity #10: Stop Guessing & Start Experimenting Across the Whole Journey

Every PDP-to-checkout journey has its own friction points. What slows shoppers down on one site might not matter at all on another. That’s why best practices are a starting point – not a guarantee.

The only way to know what actually works for your customers is to test it.

A/B testing and multivariate testing let brands validate ideas before committing to them. They give you confidence that a change is genuinely improving performance, not just looking like a good idea or performing well in a demo. And when tests don’t land the way you expected, that’s not failure, but learning.
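
One common way to check whether a difference in conversion rate is more than noise is a two-proportion z-test. The sketch below is a generic statistical example, not a description of how any particular testing platform computes its results. The function name and inputs are assumptions.

```python
import math
from typing import Tuple


def two_proportion_z_test(
    conv_a: int, n_a: int, conv_b: int, n_b: int
) -> Tuple[float, float]:
    """Return (z statistic, two-sided p-value) for variant B vs. control A.

    conv_* are conversion counts and n_* are visitor counts.
    Assumes both groups have visitors and the pooled rate is not 0 or 1.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the assumption of no real difference.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

A small p-value suggests the observed lift is unlikely to be chance alone. It does not say how large the lift is, so it is worth reading alongside the actual difference in conversion rate.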

Testing, trying, and iterating is the fastest way to understand your customers better.

The most effective teams don’t just test individual pages in isolation. They experiment across the full journey: from how a product is presented on the PDP, to how the cart communicates value, to how checkout handles trust and friction.

By measuring both conversion impact and downstream business value, such as revenue per visitor, return rates, and repeat purchase behavior, brands can discover what works best for all types of customers.
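
To show why conversion rate alone can mislead, here is a minimal sketch that compares two variants on conversion rate, average order value, and revenue per visitor. The class name and all figures are made up for illustration and are not real results.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    """Hypothetical aggregate results for one test variant."""
    visitors: int
    orders: int
    revenue: float

    @property
    def conversion_rate(self) -> float:
        return self.orders / self.visitors

    @property
    def average_order_value(self) -> float:
        return self.revenue / self.orders

    @property
    def revenue_per_visitor(self) -> float:
        # Equivalent to conversion_rate * average_order_value.
        return self.revenue / self.visitors


# Illustrative numbers only.
control = VariantResult(visitors=10_000, orders=300, revenue=18_000.0)
variant = VariantResult(visitors=10_000, orders=290, revenue=19_140.0)
```

In this made-up case the variant converts slightly fewer visitors than the control, but its higher average order value gives it more revenue per visitor, so judging it on conversion rate alone would hide the gain.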

How AB Tasty Helps E-commerce Brands Turn Journey Friction Into Sales Growth

Every opportunity we’ve covered in this article, from PDP clarity to checkout confidence, comes down to the same thing: understanding what your customers need at each step, and making use of the right tools to act on it.

That’s where AB Tasty comes in.

We help e-commerce brands optimize the moments that matter most, from product discovery through to purchase. Through experimentation, personalization, feature management, and AI-powered recommendations, your teams can test PDP layouts, refine cart flows, improve checkout experiences, and validate recommendation strategies without slowing down to wait on development cycles.

At AB Tasty, we take pride in helping you to learn fast. Every test, personalized experience, and feature rollout is an opportunity to better understand your customers and make smarter decisions for your next test.

Whether you’re a marketing team running on-site campaigns, a product team iterating on the checkout flow, or an engineering team managing progressive feature releases – AB Tasty can provide it all in a one-stop-shop format.

Better e-commerce experiences aren’t built in a single sprint, but maintained through consistent iteration, brave testing, and a team that’s daring to go further.

We’re here for every step of that journey. Let’s build something better, together.

The Bottom Line: Better Journeys Build Better Sales

The path from PDP to checkout is filled with optimization opportunities, especially when businesses partner with the right optimization platform, like AB Tasty.

Brands that reduce friction, improve relevance, and build confidence can unlock stronger sales performance. Revenue growth doesn’t just come from increasing traffic, but from breaking down the customer journey and improving it step by step.

Ready to see what kind of growth is possible when we put teamwork to the test and make the dream work for you?

FAQs

Still have questions about optimizing between product detail pages and checkout? Here are the answers you need.

What is a PDP?

A Product Detail Page (PDP) refers to the page on an e-commerce website that gives detailed information about a specific product, such as images, pricing, descriptions, and reviews. The main goal of a PDP is to help shoppers decide whether to buy the product.

What is the difference between a PDP and a PLP?

A Product Listing Page (PLP) shows a collection of products within a category or search result. For instance, if you click on “sneakers,” you’ll see a list of sneakers to browse. A PDP, on the other hand, focuses on one specific product. PLPs help users browse and compare items, whereas PDPs drive the final purchase decision.

Why is a PDP important?

A PDP is important because it directly affects conversion rates by giving customers the information and confidence they need to make a purchase. A strong PDP can also reduce returns, improve trust, and increase average order value.

What can I optimize with a PDP?

With a PDP, you can optimize various elements such as product titles, images, descriptions, SEO keywords, pricing visibility, reviews, and calls-to-action. You can also improve page speed, mobile usability, upselling opportunities, and conversion performance through testing and analytics.

About the Author

Stephanie Safdie

Stephanie Safdie holds a bachelor’s degree in English Language and Literature from the University of Maryland, specializing in multimedia studies. She has worked as a social media video creator, freelance copywriter, and SEO copywriter at Greenly climate tech, and runs the travel blog Destination Dreamer Diaries.