Have you been dreaming of an email marketing campaign to generate more revenue? If so, you’ve come to the right place.

Whether you’re in B2B or B2C marketing, it’s no secret that email marketing is a super effective way to communicate with your customers on your terms.

In fact, according to eMarketer, 80% of retail professionals cite email marketing as their greatest driver of customer retention.

However, email marketing has evolved a great deal over the years.

In order to connect with your customers, increase sales, onboard customers, move buyers down the purchasing funnel, or achieve other goals, you have to get personal.

Consumers want personalized content, so they’re likely to respond better to personalized forms of communication, and to email remarketing campaigns in particular.

In other words, email remarketing campaigns are a great resource for you to connect with your consumer and generate more revenue.

Email remarketing defined

Email remarketing consists of capturing and using information about your customers in order to achieve better marketing results through personalized email marketing campaigns.

When a visitor browses a website, marketers can access navigation information using a browser cookie. A browser cookie is a small piece of data stored in the visitor’s browser that lets the site recognize that visitor and record behavior and actions during each visit.

Similar to retargeted ads, email retargeting campaigns use behavioral and action-based information to tailor personalized email campaigns. In addition, email retargeting can be used to generate retargeted ads on social media and display networks.

Now let’s discuss why you should use email remarketing.

Why you should start email remarketing

Email remarketing campaigns allow marketers to produce highly targeted, highly converting campaigns.

Because they work on the same principles found in retargeted ads, email remarketing can achieve better marketing results compared to traditional digital advertising like Facebook Ads and Google AdWords campaigns.

Let’s see what email remarketing can do.

1. Re-engage your customers

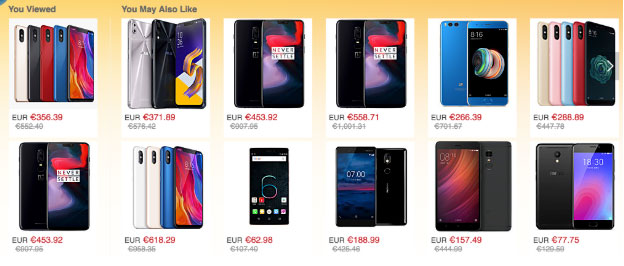

Let’s take a look at a typical situation: Most visitors visit one or two product pages before leaving your website altogether.

So, how can you re-engage these visitors?

Email remarketing can use tracked information to display relevant ads in emails. You can re-engage visitors by showing them special offers related to the product they just saw.

If used wisely, email retargeting helps your company re-engage inactive customers and increase customer retention among active users.

2. Achieve better clickthrough rates

Email remarketing allows for personalized and relevant ads.

According to data collected by SuperOffice, emails that are segmented, or targeted to a specific group of people, perform almost 40% better than a general email.

Imagine what you could do with a lift of almost 40% in your email performance.
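
To make the idea of segmentation concrete, here is a minimal sketch of how a subscriber list could be split into behavior-based groups. The `Subscriber` fields (`last_viewed_category`, `has_purchased`) and the segment names are illustrative assumptions, not part of any particular email platform.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Subscriber:
    email: str
    # Hypothetical field: the last product category this person browsed, if any.
    last_viewed_category: Optional[str]
    # Hypothetical field: whether this person has bought anything before.
    has_purchased: bool


def segment_subscribers(subscribers: List[Subscriber]) -> Dict[str, List[str]]:
    """Group subscriber emails by a simple behavioral key."""
    segments: Dict[str, List[str]] = defaultdict(list)
    for sub in subscribers:
        if sub.last_viewed_category is None:
            key = "no_recent_activity"
        elif sub.has_purchased:
            key = f"customers:{sub.last_viewed_category}"
        else:
            key = f"browsers:{sub.last_viewed_category}"
        segments[key].append(sub.email)
    return dict(segments)
```

Each resulting group can then receive its own message, for example a first-purchase offer for browsers and a related-product suggestion for existing customers.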

3. Drive more sales

With increased clickthrough rates and more chances to convert, your retargeted customers are likely to bring in more revenue for your company.

In fact, HAL Open Science reports that email remarketing can help you increase your overall conversions by 10%.

That’s because your campaigns target just the right person at the right time.

4. Reduce shopping cart abandonment

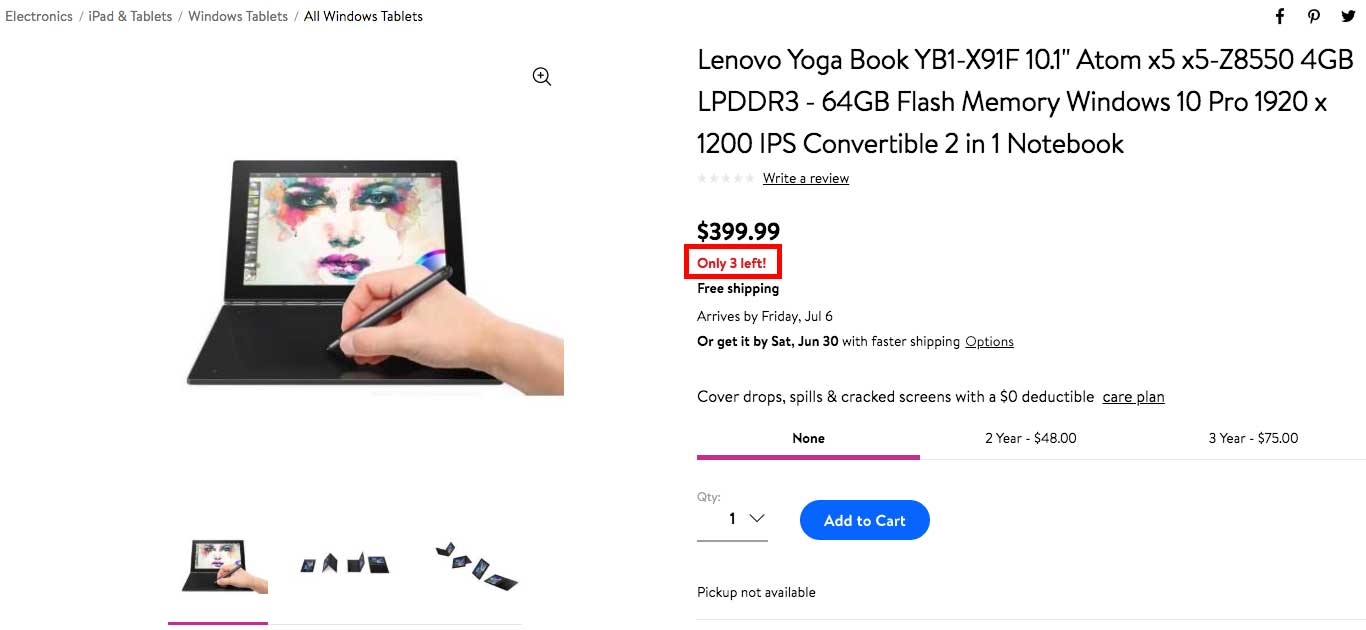

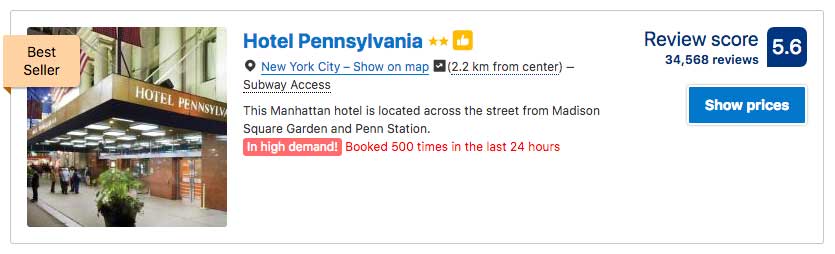

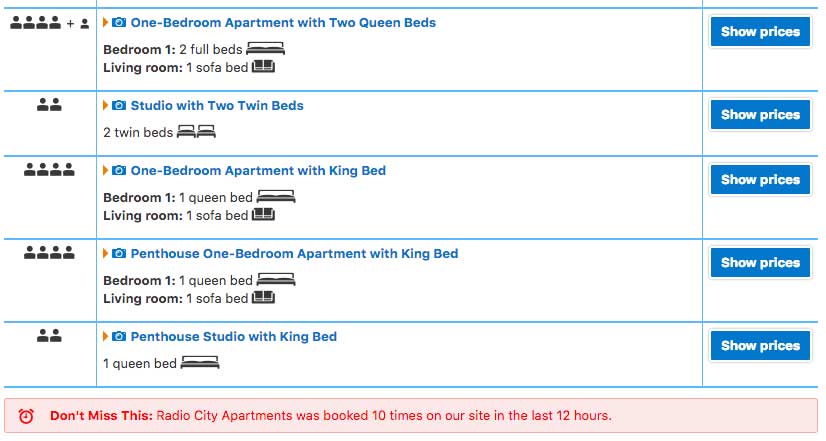

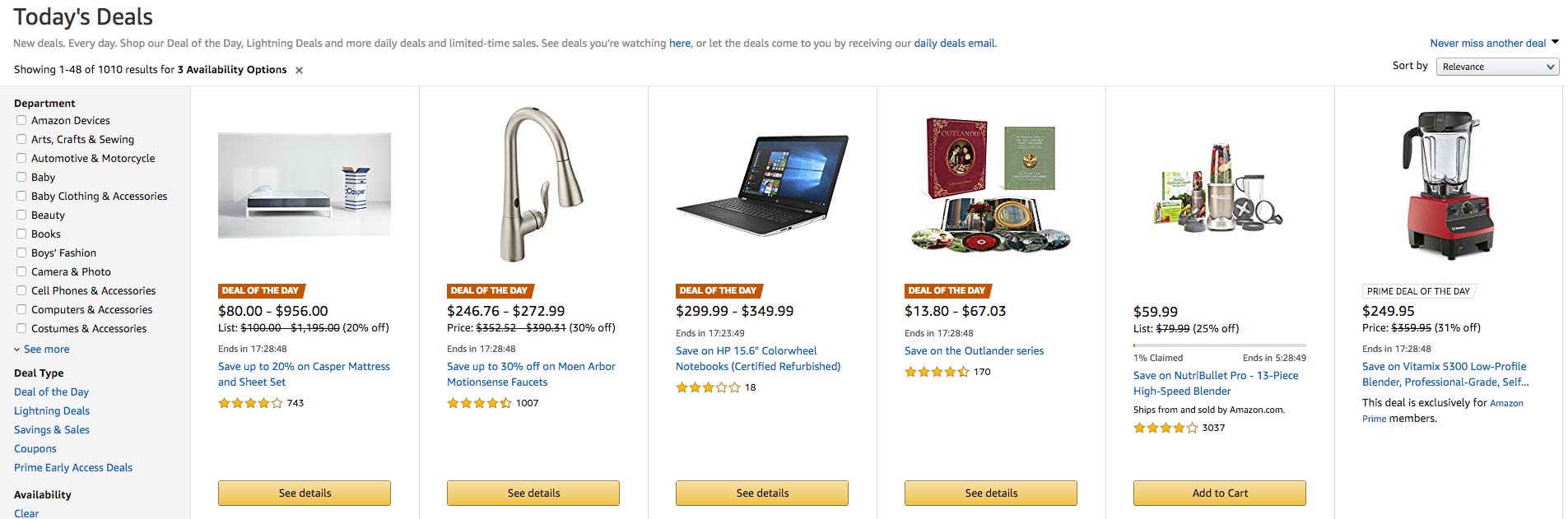

One could say that email remarketing was basically invented to reduce cart abandonment.

According to the Baymard Institute, nearly 70% of shoppers abandon their carts. Email remarketing is a huge opportunity to remind shoppers of what they’ve been browsing and to recover this “lost sale.”

At the same time as you remind your customers about their desired products, email remarketing produces a fear of missing out (FOMO) effect. Your customer will feel light pressure as this might just be their last chance to buy that product at a discounted price.
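
As a rough sketch of how an abandoned-cart reminder could be triggered, the snippet below selects carts that have been idle for a while but have not been purchased or already reminded. The `Cart` fields and the one-hour and three-day thresholds are assumptions chosen for illustration; the right timing depends on your own data.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Cart:
    email: str
    items: List[str]
    last_updated: datetime
    purchased: bool = False
    reminder_sent: bool = False


def carts_due_for_reminder(
    carts: List[Cart],
    now: datetime,
    min_idle: timedelta = timedelta(hours=1),  # assumed: wait before nudging
    max_idle: timedelta = timedelta(days=3),   # assumed: stop once the cart is stale
) -> List[Cart]:
    """Return non-empty, unpurchased carts idle long enough to merit one reminder."""
    due = []
    for cart in carts:
        idle = now - cart.last_updated
        if (
            cart.items
            and not cart.purchased
            and not cart.reminder_sent
            and min_idle <= idle <= max_idle
        ):
            due.append(cart)
    return due
```

A scheduled job could call `carts_due_for_reminder` periodically, send the email for each returned cart, and then set `reminder_sent` so the same shopper is not contacted twice.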

5 examples of email remarketing campaigns

1. FOODPANDA: FoodTech

FoodPanda knows that hunger cannot wait. In the image above you can see that they retarget with two magical words: “FREE+DELIVERY”

A simple free delivery offer could be all it takes to convince your customer to try a new restaurant that they’ve already been looking at.

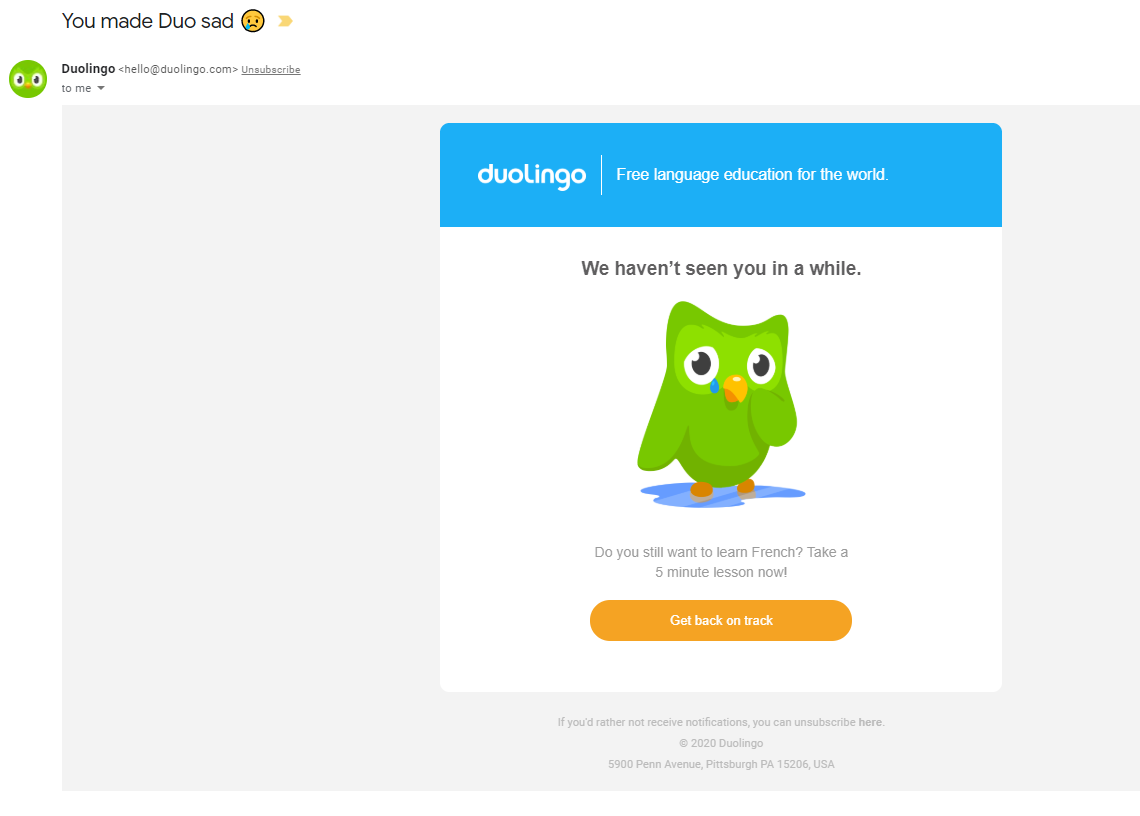

2. DUOLINGO: EducationalTech

Duolingo, a language-learning app, applies a different approach in its remarketing campaign: emotion.

If you haven’t used the app recently, they let you know that you haven’t been seen in a while and that it’s time to get back on track with your learning.

They even take it to another level by mentioning that you’ve made Duo the owl, the face of their app, sad because of your absence.

This is a great way to apply human emotion to a remarketing campaign to re-engage users.

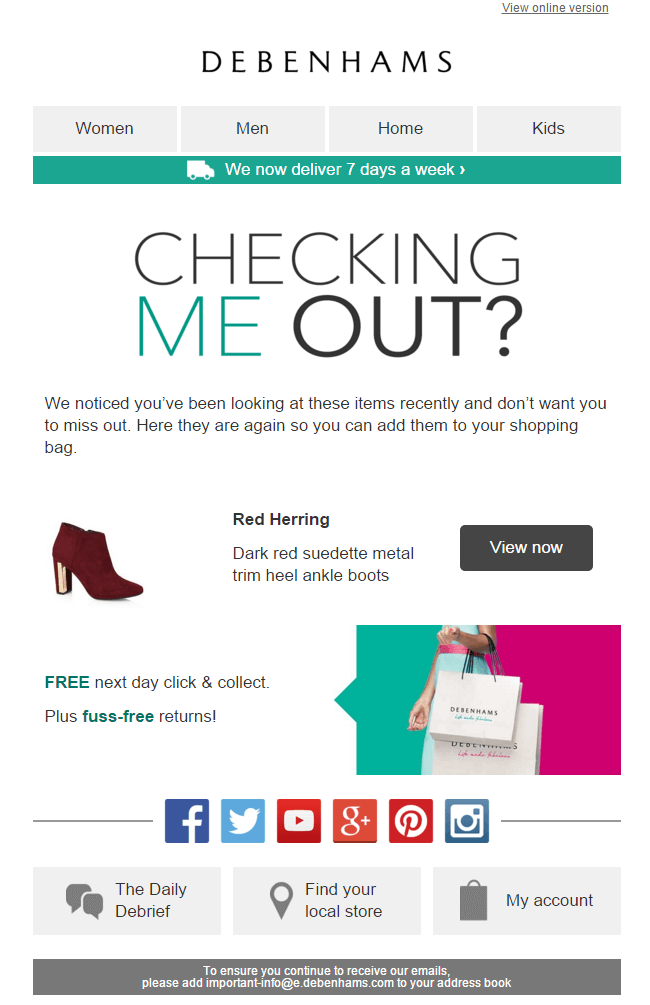

3. DEBENHAMS: Fashion

In Debenhams’ email remarketing campaign, they point out items a customer was browsing but hasn’t added to their cart.

This email also includes enticing incentives for buyers: FREE next-day click & collect and fuss-free returns. What more could you ask for?

Interestingly enough, this email doesn’t mention the customer’s name, but it still feels personal as it is targeted directly at customers viewing the product.

4. NIKE: Sportswear

In a similar fashion, Nike triggers a retargeted email after you’ve left some items in your cart.

While they don’t display your abandoned items, they encourage you to talk with a sales representative over the phone or through their online chat.

Finally, they also heavily highlight their “FREE SHIPPING – FREE RETURNS” policy in order to convince undecided customers.

This is especially important to highlight considering that shipping cost is one of the main reasons for cart abandonment.

5. FRESHBOOKS: SaaS

Want to retarget your own customers to upgrade to a new plan? Take a look at FreshBooks’ email campaign as an example.

With 19 days left in a free trial, they offer 60% off any plan for your upgrade. This not only entices the user with a discount but also reminds them that the offer is time-sensitive, tied to how much of their free trial they have used.

Email retargeting best practices

Now that you have a few examples to start your retargeting campaigns, here are some best practices to keep in mind while you set them up.

- Timing is everything: The sooner you can start your campaigns, the better. If you contact website visitors shortly after they’ve left your page, they’ll be more likely to return and reconsider your products/services.

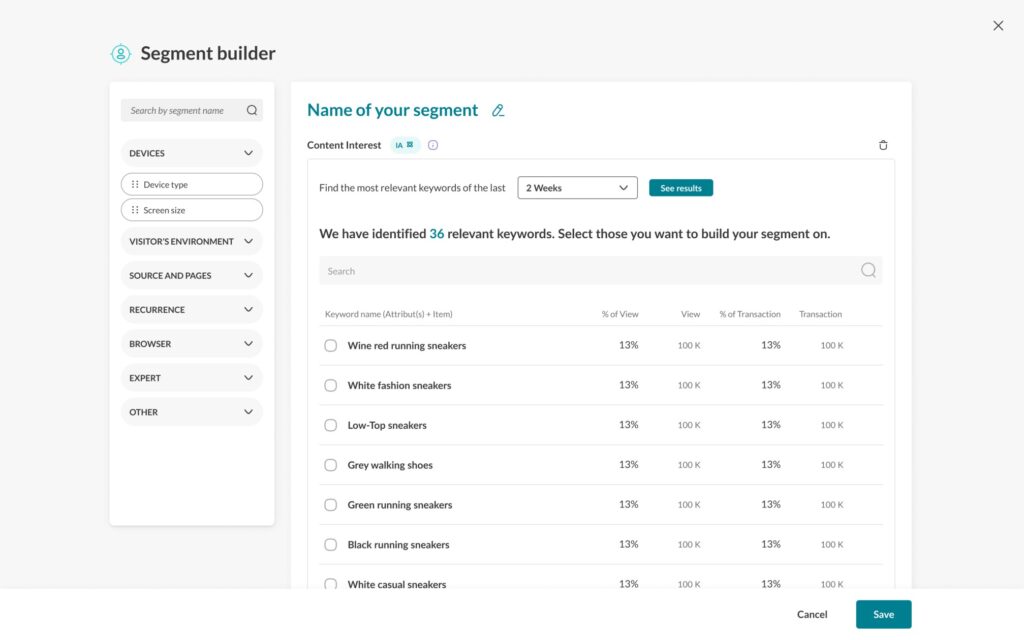

- Keep it relevant: To be sure you’re targeting the right users, email segmentation is the best way to go. Segmentation is the process of separating the subscribers in your email list into smaller groups. This will help you be sure that you’re sending the right emails to the right people.

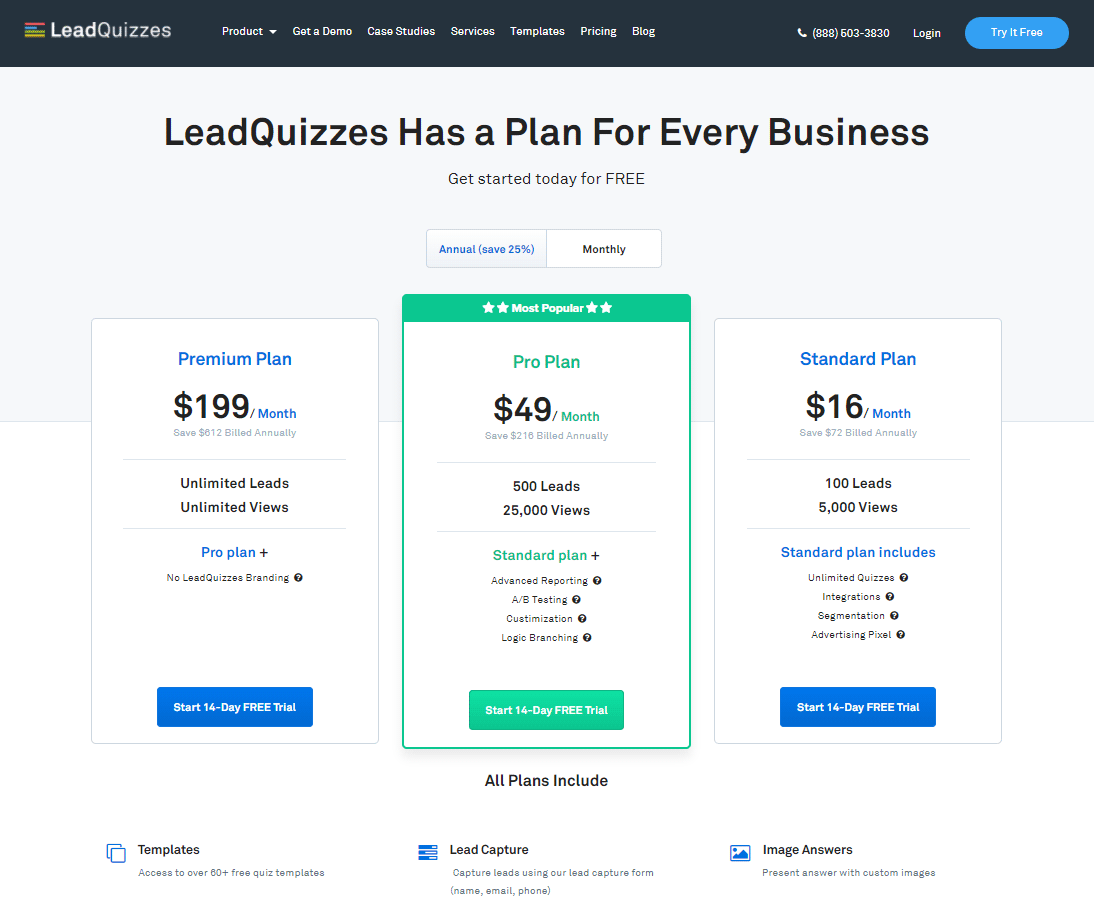

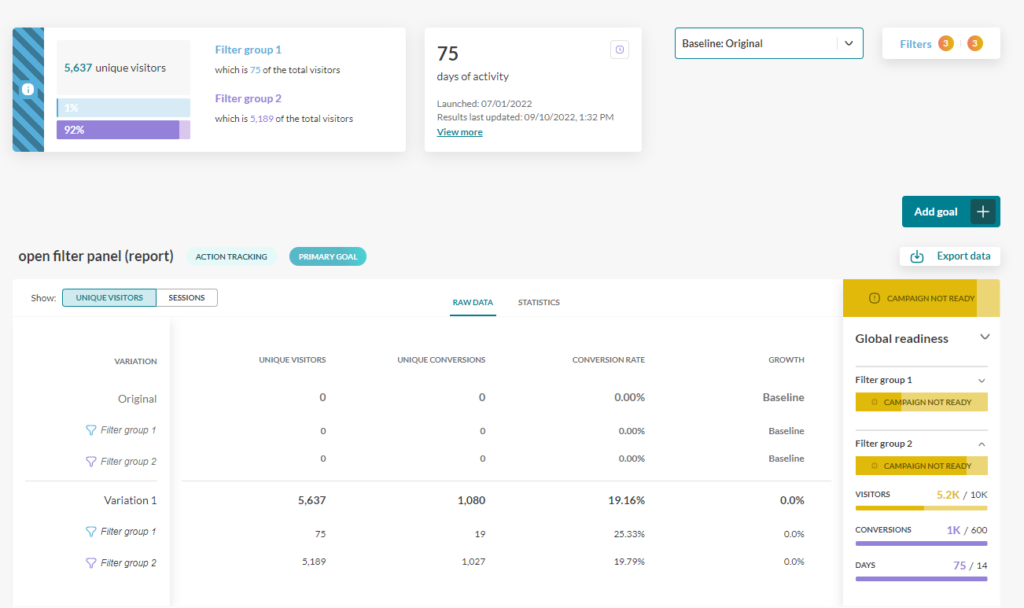

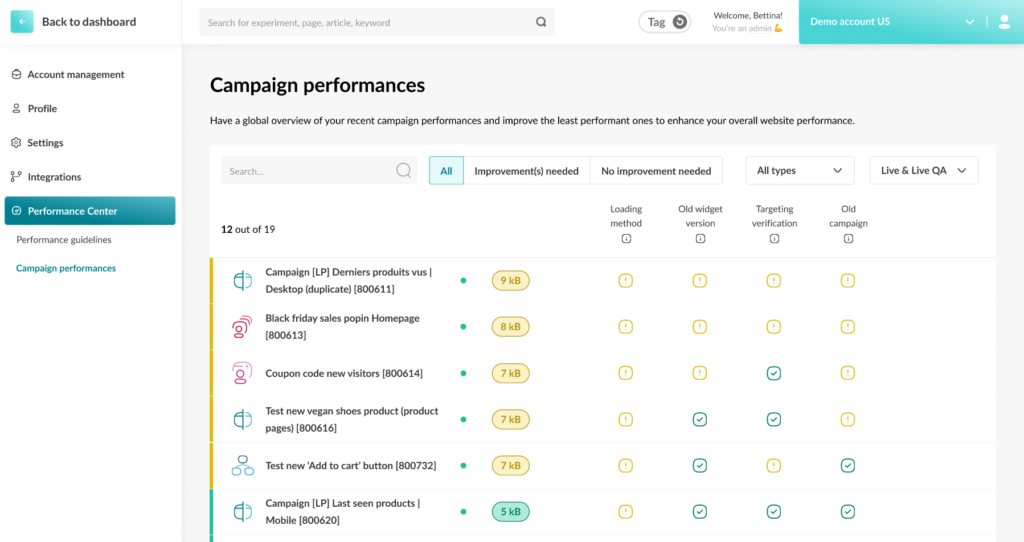

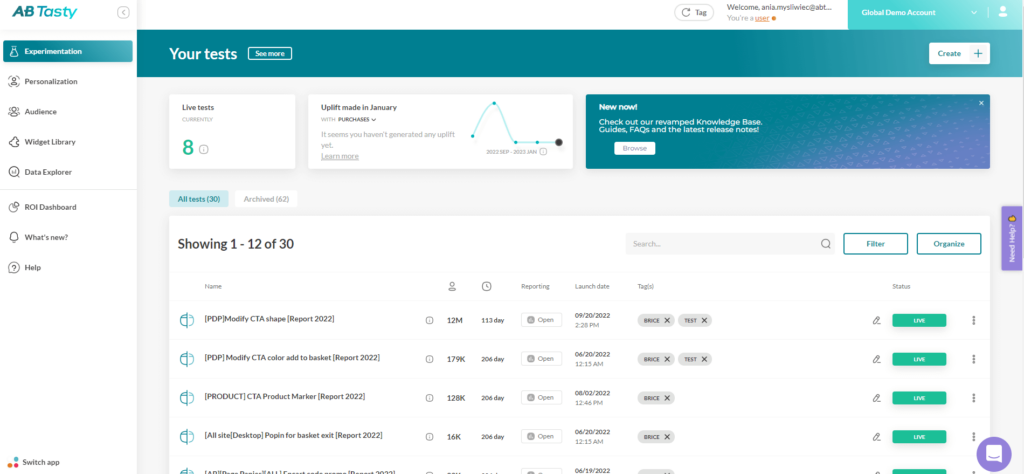

- A/B test your campaigns: An A/B test compares two versions of your email to see which one produces better results. After a few tests, your team should start to identify trends and common patterns that lead to higher open and clickthrough rates.
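
To judge whether the difference between two email versions is more than noise, one common approach is a two-proportion z-test on the open (or click) counts. The sketch below is one way to do it using only the Python standard library; the function name and the conventional 0.05 threshold are assumptions, and your testing tool may use a different method.

```python
import math
from typing import Tuple


def ab_test_open_rates(
    opens_a: int, sent_a: int, opens_b: int, sent_b: int
) -> Tuple[float, float, float, float]:
    """Two-proportion z-test. Returns (rate_a, rate_b, z, two_sided_p_value)."""
    rate_a = opens_a / sent_a
    rate_b = opens_b / sent_b
    # Pooled rate under the assumption that both versions perform the same.
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:
        # Both versions had 0% or 100% opens: no measurable difference.
        return rate_a, rate_b, 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value


rate_a, rate_b, z, p = ab_test_open_rates(200, 1000, 250, 1000)
if p < 0.05:
    print(f"Version B differs from A (A={rate_a:.1%}, B={rate_b:.1%}, p={p:.4f})")
else:
    print(f"No clear winner yet (p={p:.4f})")
```

A small p-value suggests the gap between the two versions is unlikely to be chance alone; with small send volumes, it is usually safer to keep testing before declaring a winner.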

Whether you’re looking to personalize your email content to capture customer attention or A/B test your subject lines to determine the best-performing phrase, choosing the right software will help you transform your ideas into reality.

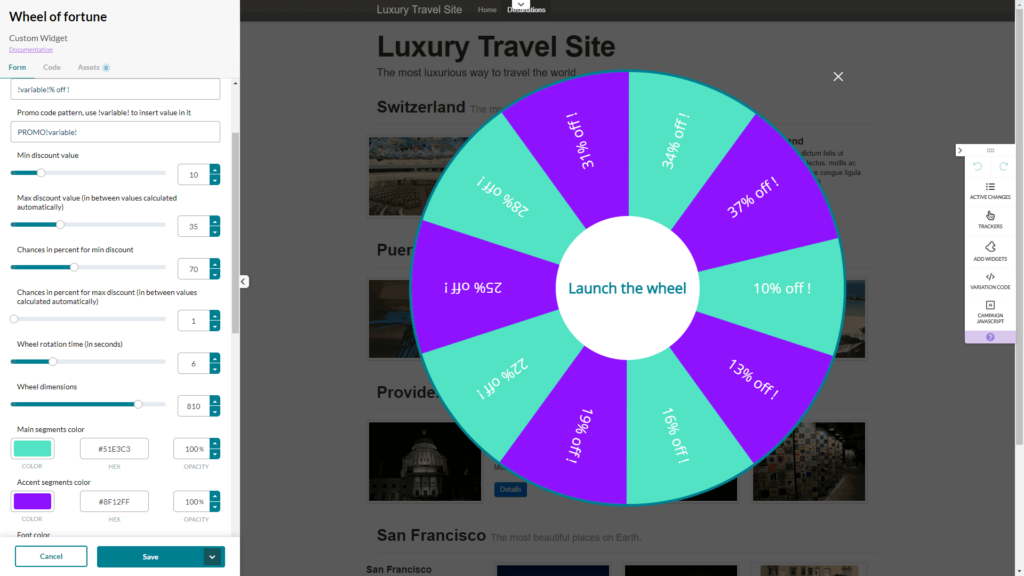

AB Tasty is the complete experience optimization platform to help you create a richer digital experience for your customers — fast. From email remarketing to A/B testing your subject lines, this solution can help you achieve personalization with ease.

Connect with your website visitors

Whether an email remarketing campaign will be a new tactic for your team or you’re looking for some best practices to employ, these campaign examples will change the way you communicate with your consumers.

Relevant and personalized content sent at just the right time is key to generating more revenue with your email campaigns.